For the past five years I have been researching topics relating to the frontiers of animation: motion graphics, interface design, transmedia and, most recently, animation and machines (more specifically, robots). What started as an attempt to investigate how animation is being applied to the expressions of androids ended up opening a new research horizon. My interest was sparked by a video I watched on the research conducted by Hiroshi Ishiguro, director of the Intelligent Robotics Laboratory at Osaka University, who has built lifelike robots so real they seem believably upset when Mr Ishiguro makes fun of them.

As I was overcome by uncanny feelings towards what I had just seen on YouTube, the algorithm’s “next-on” suggestions led me to videos with titles like The 10 Best Social Robots You Can Buy, which are compilations of professionally produced commercials demoing social robots like Aido, Jibo, Tapia and Bigi. Even though the actual interaction with these characters may not be as seamless as it is portrayed in these videos, they present friendly ways to interact with robots. This led me to look further into these “creatures”: the social robots.

Social robots can perform a variety of tasks such as sending emails, shopping online, making airline reservations, scheduling appointments and many other things we would do on a smartphone or computer. Their means of communication with humans include synthesized voice, speech recognition and optical recognition, which in some cases are aided by animated characters on a screen. A number of social robots have become available to retail consumers, ranging from high-end realistic androids to cartoon-like creatures. All these robots interact with human beings by emulating our abilities to hear, speak and see, and by conveying emotions through motion and facial expressions. Social robot developers have realized the importance of animation techniques for improving human-robot interaction, applying animation principles as a means to facilitate man-machine communication.

Van Breemen from Philips Research proposes to “apply principles of traditional animation to make the robot’s behavior better understandable” (van Breemen, 2004). Tiago Ribeiro and Ana Paiva from INESC-ID in Portugal suggest adapting Disney’s practices and principles of animation to robotics: “Our work shows that applying animation principles to robots is beneficial for human understanding of the robots’ emotions” (Ribeiro, Paiva, 2012). In 2016, at the ACM/IEEE Human-Robot Interaction conference, Balit, Vaufreydaz and Reignier from Université Grenoble-Alpes presented open-source robot animation software that allows developers to animate robots as a medium, stating:

As previous research has shown, 3D animation techniques are of great use to animate a robot. However, most robots don’t benefit from an animation tool and therefore from animation artists knowledge (Balit, et al., 2016).

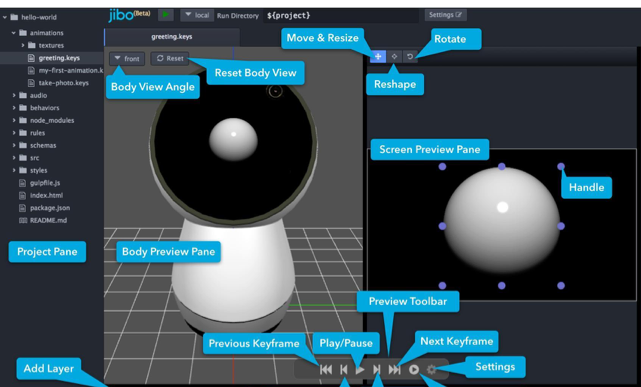

Attention should be given to Jibo, a personal assistant robot that evolved out of research by social robotics pioneer Cynthia Breazeal at the Personal Robots Group of MIT’s Media Laboratory and was brought to market through a crowdfunding campaign. The robot, which was released in 2017, delivers a highly expressive experience, even though its range of animation possibilities is limited by its solid cylindrical body and single eyeball.

The robot’s animation system is accessible via an open-source application that allows users and third-party developers to customize Jibo’s actions, dialogues, gestures and expressions by choreographing its movements, as explained on the company’s website:

Jibo’s animation system is responsible for coordinating expressive output across the robot’s entire body, including motors, light ring, and eye graphics. The system supports playback of scripted animations, as well as expressive look-at and orientation behaviors (Developers.jibo.com, 2017).

An additional case study that may interest animation scholars and enthusiasts is the design process for Cozmo by Anki, a company that has taken a step towards integrating animators into the product design team. Anki hired Carlos Baena, who previously worked at Pixar on the animated films Wall-E (2008, by Andrew Stanton) and Toy Story 3 (2010, by Lee Unkrich), to be part of the team that conceived how Cozmo moves and interacts, designing a robot whose expressions are mediated by an animated LED-style eye display. Even though Cozmo looks like a tractor, it acts and moves in a surprisingly friendly way.

During the design process Baena proposed that Cozmo should have a personality, and showed that there was no need for it to appear human in order to achieve this objective. Cozmo’s display of two digital “blue eyes” functions as its face. In an interview with Fast Company magazine, Baena states: “You don’t need a lot of features to have characters portray emotion” (Salter, 2016).

Judging by these examples, it is not far-fetched to speculate that in the near future your children’s favorite cartoon character will gain physical embodiment as a robot and read bedtime stories to them, that Remy the rat from Ratatouille may be helping you out in the kitchen, and that Minions will be helping you with Google Maps on your dashboard.

References

Balit, E., Vaufreydaz, D., Reignier, P. (2016). Integrating Animation Artists into the Animation Design of Social Robots: An Open-Source Robot Animation Software. ACM/IEEE Human-Robot Interaction 2016, Christchurch, New Zealand.

Hislop, M. (2016). “Anki Cozmo robotic companion”, Designboom magazine [online]. Available at: https://www.designboom.com/technology/anki-cozmo-07-07-2016/ [Accessed 16 Aug. 2017].

Ribeiro, T., Paiva, A. (2012). The Illusion of Robotic Life: Principles and Practices of Animation for Robots. INESC-ID, Universidade Técnica de Lisboa, Portugal.

Salter, C. (2016). How Anki Created A Pixar-Inspired, AI-Powered Toy Robot That Feels. Innovation by Design, Jun 2016, Fast Company. [online] Available at: https://www.fastcompany.com/3061276/meet-cozmo-the-pixar-inspired-ai-powered-robot-that-feels [Accessed 18 Jul. 2018].

van Breemen, A.J.N. (2004). Bringing Robots to Life: Applying Principles of Animation to Robots, Philips Research, Eindhoven, Presented at CHI 2004, Vienna.

João Paulo Schlittler is a researcher and designer who has worked in film, television and digital media since 1987. He holds a PhD in Design from Universidade de São Paulo, a Master’s degree in Interactive Telecommunications from NYU and a B.A. in Architecture from Universidade de São Paulo. Since 2004 he has been a Professor at the School of Communication and Arts of Universidade de São Paulo, where he is the director of the animation and motion design research lab ZOOTROPO. João Paulo headed the design department at TV Cultura in Brazil, and was the Director of Broadcast and Interactive Design at Discovery Communications and Director of Graphics and Visual Effects at HBO.