The digital humans are among us. In February of 2021, Epic Games, developers of the Unreal Engine, a leading video game software engine, announced the impending launch of its MetaHuman Creator application. “Creating truly convincing digital humans is hard,” acknowledged Epic’s press release (Unreal Engine 2021), while claiming that the MetaHuman Creator could provide real-time generation of high-fidelity digital characters within minutes, drawing from a library of pre-set faces, 30 hairstyles, and 18 body types, with the ability to further custom-sculpt the avatars. By April, an early access version of the creator app was available and seemed to deliver on Epic’s early promises – near photo-realistic digital characters created with adjustable facial features, skin complexion, make-up, teeth, and hair (see Figure 1). A legion of MetaHumans ready to populate a developing Metaverse – free to travel across gaming environments, VR experiences, and animated films, but decisively tethered to the Unreal platform. Perhaps most significant in terms of their actual use, MetaHumans emerge fully “rigged” for animation (embedded with a series of manipulation control points) and available for live linking to a number of performance capture applications.

While the character generation capabilities of Epic’s platform are impressive, it is in the sphere of motion capture automation (facial automation in particular) where the disruptive potential of these recent advances in “digital humanity” becomes most apparent. As Eric Furie from USC’s Motion Capture Program confirms, “Facials still remain the Holy Grail of performance capture” (Furie in Sito 2013: 213). Tellingly, two smaller performance capture technology companies recently acquired by Epic were instrumental in the development of the MetaHuman project: 3Lateral, specializing in 3D and 4D scanning technology, and Cubic Motion, focused on the use of computer vision technologies for the automation of facial animation. The Epic, 3Lateral, and Cubic Motion partnership first caused an industry stir when, at the 2018 Game Developers Conference, they unveiled “Siren,” a photo-realistic avatar billed as “the first digital human,” rendered in real-time and linked to the live performance of an actor on stage (Cubic Motion 2018). It is a display that would have been unthinkable in a pre-AI era of performance capture – Cubic Motion’s computer vision and machine learning algorithms enable the tracking and transfer of some 200 facial features, as subtle as a single eye wrinkle, without the need for physical markers (gone in this case are the iconic dots positioned on an actor’s face that we have come to associate with processes of motion capture).

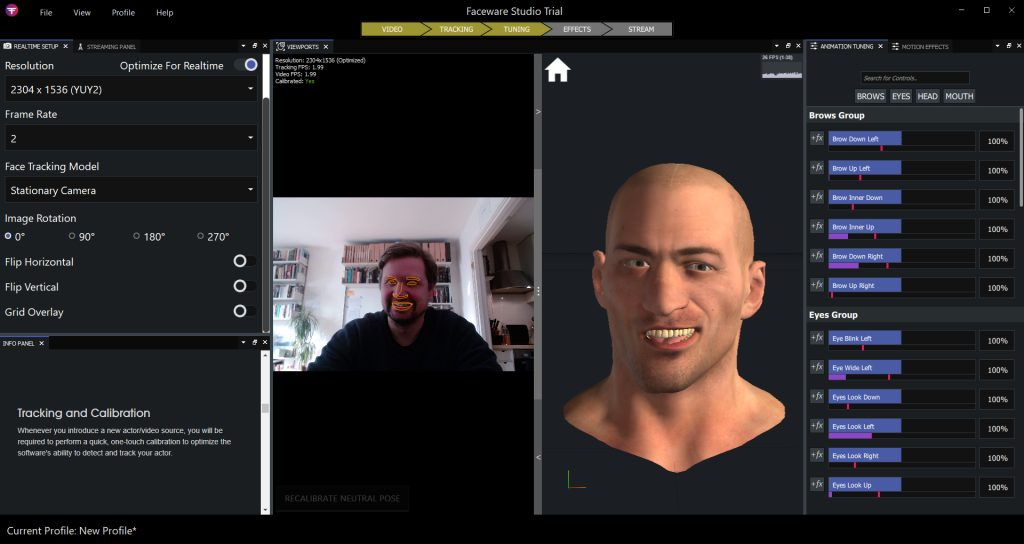

This kind of machine-learning-assisted facial automation is becoming increasingly prevalent within the FX and gaming industries, and the early release of the MetaHuman Creator comes already enabled for real-time motion capture. In addition to its own in-house expertise, Epic has been partnering on the MetaHumans project with companies such as Faceware and Digital Domain that also specialize in the automation of facial animation using AI-based approaches (see Figure 2). The latter company is perhaps best known for animating the Marvel villain Thanos in feature films such as Avengers: Infinity War (US 2018). Digital Domain employs its own facial capture system, Masquerade, the latest iteration of which uses machine learning to automate a process of facial tracking that previously involved many hours of manual work. Darren Hendler, head of the company’s Digital Humans Group, boasts, “This has completely revolutionized our delivery process, allowing us to turn around assets faster than anybody else. In the past, 50 hours of footage meant 600,000 hours of artist time. Today, Masquerade cuts that by 95%” (Seymour 2020).

Commenting on an earlier boom in performance capture technology, epitomized by films like the Lord of the Rings trilogy (NZ/US 2001–2003) and Avatar (US 2009), Mihaela Mihailova thoughtfully dissects how discussions of these innovations often involve “the systematic erasure of the animator’s contribution,” including hours of painstaking facial rigging or keyframing work (Mihailova 2016: 42). Current developments in facial automation threaten to enact an even more literal removal of labor from the process of animation. The goal of at least one segment of the industry seems apparent – linking directly and instantaneously a human performer to their rendered digital counterpart, with only technologies of automation as intermediary. In other words, animation without animators. Of course, the history of animation has always also been a history of the tools of production, one involving a perpetual process of negotiation between creativity and automation. But the configuration of animation work and animation technology is undoubtedly shifting. Scanning digital assets, preparing training data, supervising machine learning – the labor of animation seems increasingly bound up with feeding a pipeline of automation. The digital humans are among us.

References

Cubic Motion (2018) “How Cubic Motion is achieving new levels of photorealism with digital humans like Siren.” Available at: https://cubicmotion.com/case-studies/siren/ (accessed 20 March 2022).

Mihailova, M. (2016) “Collaboration without Representation: Labor Issues in Motion and Performance Capture.” Animation. 11(1): 40–58.

Seymour, M. (2020) “Masquerade at Digital Domain.” fxguide. Available at: https://www.fxguide.com/fxfeatured/masquerade-at-digital-domain/?highlight=masquerade (accessed 20 March 2022).

Sito, T. (2013) Moving Innovation: A History of Computer Animation. Cambridge, MA: MIT Press.

Unreal Engine (2021) “A sneak peek at MetaHuman Creator: high-fidelity digital humans made easy.” Available at: https://www.unrealengine.com/en-US/blog/a-sneak-peek-at-metahuman-creator-high-fidelity-digital-humans-made-easy (accessed 20 March 2022).

Joel McKim is Senior Lecturer in Digital Media and Culture and Director of the Vasari Research Centre for Art and Technology at Birkbeck, University of London.
