When designing sound for animation, especially when you have some latitude in interpreting the visuals against your fully designed soundscape, there can be a tendency to try to match everything you see on screen with a direct sonic equivalent. This immediate synchronization of sound with visual event can lead to a very artificial experience, especially if there is no other sonic content in the animation – this is often referred to as the ‘mickey-mousing’ effect.

Direct synchronization between sound and vision can work. The short film Dots (1940) by Norman McLaren shows a complete match of sound and vision, in that he painted shapes on both the visual and audio sections of the film track. In his film, you can see shapes appearing and developing, while the sounds you hear correlate directly with the visual action. Oskar Fischinger, known for his work in visual music, also experimented with direct synchronization, converting visual objects into sound, notably in his synthetic sound machine (see Hyde, 2013). However, these works were designed with this direct synchronization in mind, and for most animation, synchronization alone gives a very unnatural feel to the film.

In contrast to the idea of synchronization, Michel Chion noted the phenomenon of synchresis, “the spontaneous and irresistible weld produced between a particular auditory phenomenon and visual phenomenon when they occur at the same time” (Chion, 1994). If you run a recording of music against any piece of film, there will be moments where the music seems to catch and elevate the action you can see. Synchresis differs from synchronization in that the sound and vision can move in separate directions, meeting every so often to emphasize the aspect of the story that is key to that moment. Not everything has to match, and the freedom of movement in both vision and sound allows a reasonable amount of creativity for both the animator and sound designer.

Normally, when a sound designer is working on a soundtrack, synchresis is actively planned for. While the moments that match can be manipulated, there are also moments where the sound and vision do not merge. This can be a deliberate choice, for example as a compositional tool to develop themes in both perceptual streams, which may later combine to form a powerful synchretic fusion, the impact of which is greater due to the anticipation built up in the separate themes.

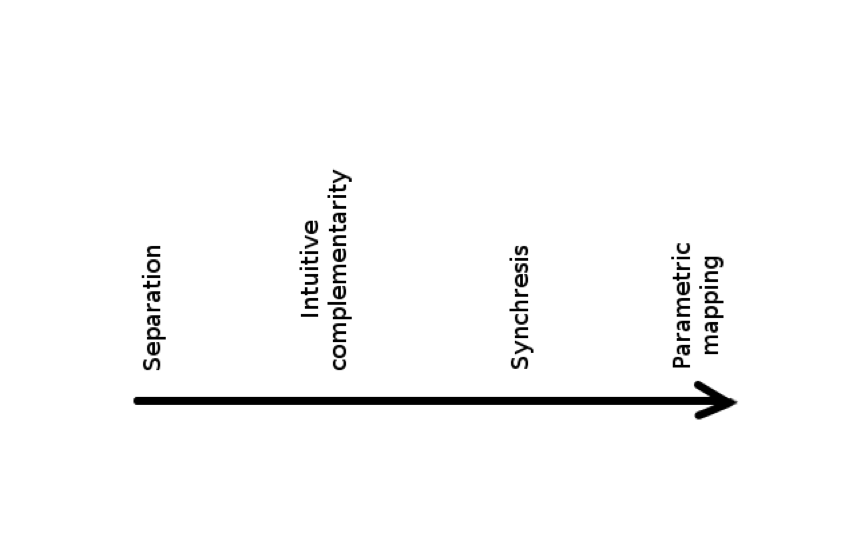

The electroacoustic composer and animator Diego Garro posited a continuum of audiovisual mapping (Garro, 2012) (see Figure 1).

This continuum can be used to place the degree of fusion between sound and vision anywhere from non-existent at Separation through to totally synchronized at Parametric mapping. Separation refers to no discernible connection between the two perceptual streams, while parametric mapping exhibits a very close relationship between sound and vision.

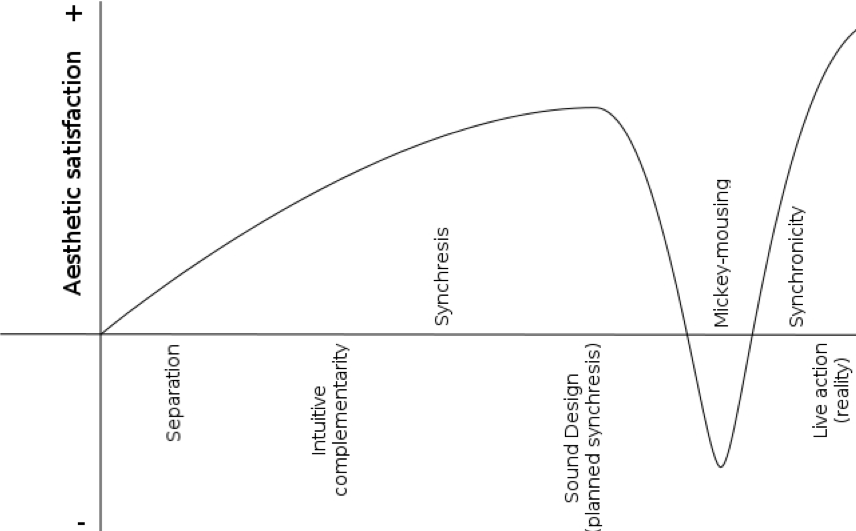

This gives the sound designer an approach to considering the structures of the audiovisual interactions that occur in their piece. However, a position on this continuum does not necessarily map to the effectiveness of the audiovisual interaction. The ‘mickey-mousing’ described earlier, for example, can lead to a very unsatisfactory experience. Writing in 1946, the animator Chuck Jones observed: “For some reason, many cartoon musicians are more concerned with exact synchronization or ‘mickey-mousing’ than with the originality of their contribution or the variety of their arrangement… I have seen a good cartoon ruined by a deadly score” (Jones, 1946).

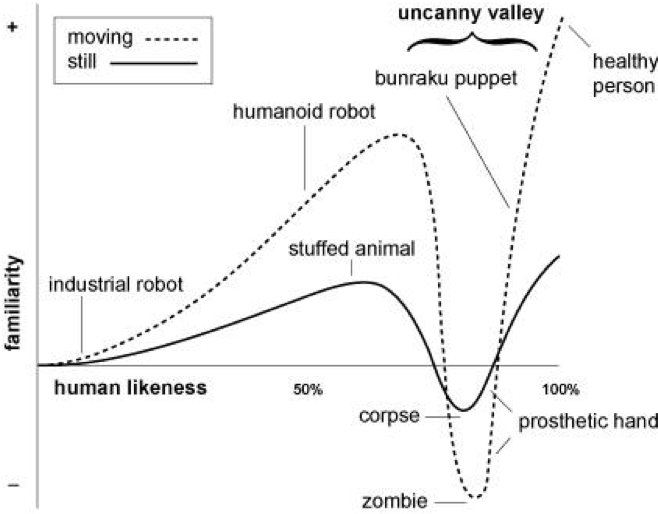

The ‘dip’ in aesthetic satisfaction that occurs with ‘mickey-mousing’ can be envisaged as occurring towards the parametric mapping end of Garro’s continuum. If we regard aesthetic satisfaction as gaining strength up to this dip, and then returning for full parametric mapping (live-action, or complete synchronicity as can be seen in McLaren’s Dots), the curve that results is reminiscent of the ‘uncanny valley’ envisaged by Mori (1970) (see Figure 2). Mori was examining the human response to objects that resembled human beings or parts of human beings. He noted that as an object started to resemble the human likeness, it became much more comfortable to look at or interact with. A stuffed teddy bear, or a humanoid but identifiably mechanical robot, was reasonably comfortable for humans to deal with, as was a normal healthy human being. However, where the likeness was almost but not quite human-like, the reaction dipped into a negative, uncomfortable zone, which he called the uncanny valley.

The ‘mickey-mousing’ effect has a similar dip in familiarity, “comfortableness”, or aesthetic satisfaction for the audience, and can cause the viewer to ‘drop out’ of the animation.

Working from Garro’s original continuum and mapping the ‘uncanny valley’ effect to account for ‘mickey-mousing’, I have proposed the following diagram as a guide to the sound designer when considering how the audience is likely to respond to the connections made between visual images and sound design (see Connor, 2017) (Figure 3).

Other than a true reproduction of a live-action recording, the most aesthetically satisfying experiences for the audience are spontaneous synchresis, planned synchresis, and synchronicity. It follows that, for the animator and sound designer, work exhibiting these three combinations of sound and vision is likely to be the most effective at capturing and retaining the audience’s interest, although the narrative structure and a coherent visual and sonic palette are also, of course, essential in crafting engaging work.

References

Chion, M. (1994), Audio-Vision: Sound on Screen, New York: Columbia University Press.

Connor, A. (2017), The Audiovisual Object, Ph.D. Thesis, University of Edinburgh. Available at http://hdl.handle.net/1842/26047 [accessed 30/08/2019]

Garro, D. (2012), ‘From Sonic Art to Visual Music: Divergences, Convergences, Intersections’, Organised Sound, 17(02), pp. 103-113.

Hyde, J. (2013), ‘Oskar Fischinger’s Synthetic Sound Machine.’ In: Keefer, C. and Guldemond, J. (eds.), Oskar Fischinger 1900-1967: Experiments in Cinematic Abstraction. Amsterdam/Los Angeles: Eye Filmmuseum; Center for Visual Music.

Jones, C. (1946), ‘Music and the Animated Cartoon’, Hollywood Quarterly, 1(4), pp. 364-370.

Mori, M. (1970), The Uncanny Valley, tr. MacDorman, K.F. & Kageki, N., 2012. Available at http://spectrum.ieee.org/automaton/robotics/humanoids/the-uncanny-valley [accessed 30/08/2019]

Dr. Andrew Connor is a Teaching Fellow in Design and Digital Media at the University of Edinburgh. His teaching and current practice center on 3D Animation and Virtual Environments, while his research interests include the fusion of sound and vision in audiovisual compositions, electroacoustic composition, abstract animation, visual music, and the effect of performance space on audience immersion.
